Shared state blocks parallel executions

Two tests update the same customer or order, one assertion fails, and the rerun tells you nothing about the real product risk.

Give every parallel run an isolated, business-valid starting state with pre-generated data, dataset leasing, and cross-service orchestration, so tests start immediately without shared-state collisions.

Instead of replaying full end-to-end chains, tests request exact business-valid states through dataset contracts, orchestration, and just-in-time extensions.

Ask for the exact

business state

Get isolated datasets

without collisions

Start independent tests

without replaying setup

Definition

Parallel test data management gives each test run an isolated, business-valid starting state so parallel execution does not break on shared-state collisions, slow setup chains, or reused datasets.

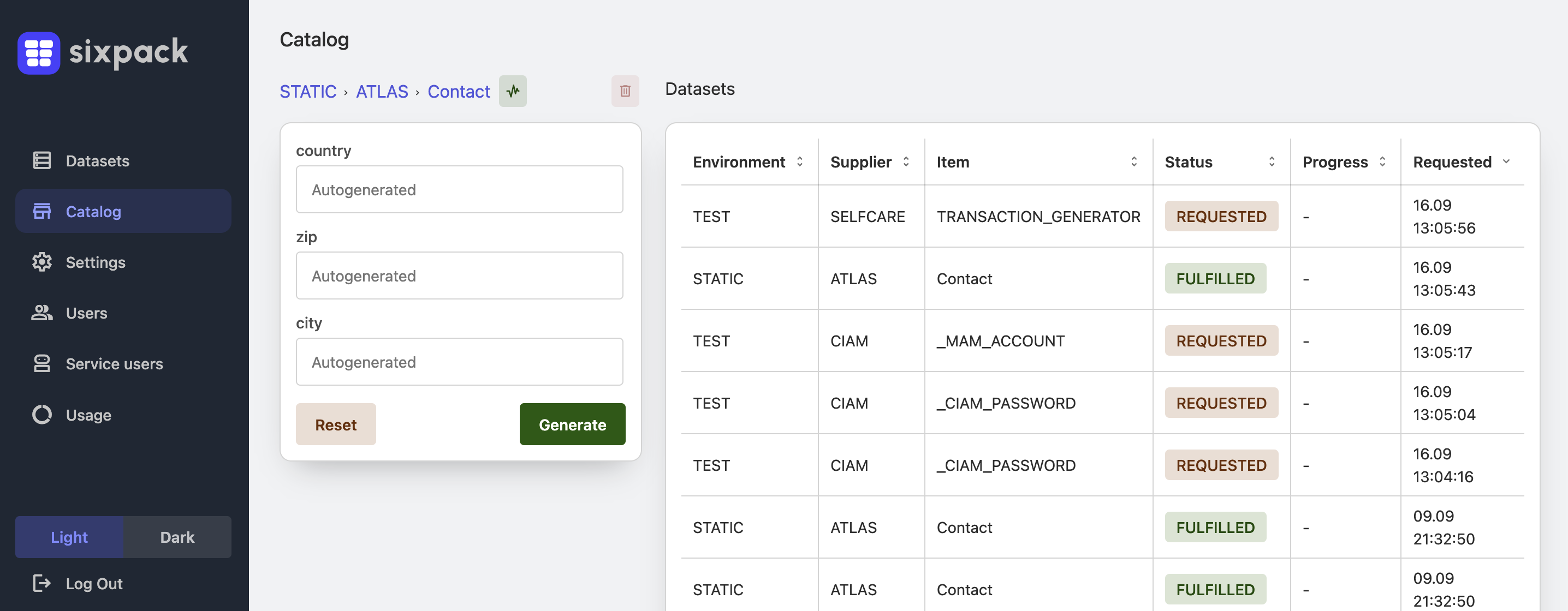

In Sixpack, that means pre-generated state, dataset leasing, and cross-service orchestration delivered on demand through UI or API.

Symptoms it fixes

Modern pipelines run fast, but test data prerequisites still do not scale

Two tests update the same customer or order, one assertion fails, and the rerun tells you nothing about the real product risk.

Jobs fan out in the pipeline, but each scenario still waits for earlier flows to create the required business state.

A meaningful state may require several services, ownership boundaries, and business rules that only another team fully understands.

Productized state-on-demand for teams that need parallel test execution without waiting on test data setup

Sixpack keeps domain knowledge with the teams that own the business logic, but exposes ready-to-use dataset types through UI and REST API, regardless of underlying complexity. Pipelines no longer need to understand the full architecture to start from the correct lifecycle state.

Proven architecture pattern and methodology

Sixpack is built on years of experience solving test data bottlenecks in complex enterprise environments, where parallel tests fail not because execution is slow, but because valid state is hard to prepare, isolate, and reuse safely.

Sixpack allocates or leases dedicated datasets per test run so scenarios stop overwriting each other. What used to feel unrealistic in automation becomes straightforward: each test gets a fresh dataset in the exact requested state.

Tests request the target business state directly instead of replaying long prerequisite flows. Sixpack pre-generates dataset states upfront and matches requests to prepared data so execution can start immediately.

Domain-owned generators compose real business logic across services without pushing that complexity into every pipeline.

Teams and pipelines request ready states through a self-service UI or API, while Sixpack builds that portal automatically from incoming generator capabilities and dataset definitions.

A concrete delivery flow from state request to parallel execution

A pipeline or tester asks for the exact business-valid state needed for the scenario through UI or API instead of rebuilding it from scratch.

Sixpack matches the request to prepared data, allocates a dedicated dataset, and prevents another run from mutating the same entities at the same time.

Execution starts from a ready state, so the suite validates product behavior instead of spending most of its time replaying prerequisite flows.

Two strategies for parallel test data

Layered storage and environment snapshots can restore infrastructure quickly, which helps when a whole stack must return to a known state. The limitation is that every persistence system involved must be snapshotted or virtualized consistently, which becomes quickly challenging in microservice architectures.

Pre-created entities, duplicated lifecycle stages, and just-in-time extensions let tests start from meaningful business states without replaying full flows. In theory, this removes the consistency problem from snapshots and shifts it into the provisioning layer, where states are prepared and matched deliberately.

In distributed systems, the practical model is Test Data as a Service: each team exposes a service that can create or advance business state the same way its system would in production. Parallel execution becomes realistic when pipelines consume and compose those services instead of depending on hidden scripts or manual preparation.

Every domain exposes data creation through an API or generator service owned by the team that understands that part of the business process.

The service creates state by simulating the same business-process steps the system would execute in production, not by bypassing logic with ad hoc inserts.

Pipelines chain these services together to build end-to-end states across microservices without embedding cross-team setup knowledge into every test.

Prepared base states can be extended just in time, so each parallel test receives a valid starting point without waiting for manual setup or validation.

Treating test state as a platform concern improves delivery metrics, not only test setup

Independent tests fail separately instead of collapsing behind one broken setup chain.

Pipelines spend less time constructing state and more time validating product behavior.

Parallel execution becomes operationally safe enough to scale across suites and teams.

Practical outcomes teams see when parallel runs start from dedicated, business-valid state

Instead of multiple runs colliding on one shared customer, order, or account, each run starts from a prepared state that is isolated before execution begins.

The delivery model shifts from replaying long prerequisite flows to requesting a ready state through UI or API, which makes fan-out in CI materially useful rather than only visually parallel.

Pipelines no longer need to encode hidden setup logic from multiple services. The teams that own those services expose the state creation capabilities once, and test suites consume them repeatedly.

Representative scenario

A team running parallel order-to-cash checks can request a customer with the correct lifecycle state, available payment setup, and downstream service alignment before the test starts. The suite validates product behavior immediately instead of spending most of its time constructing data and recovering from shared-state interference.

Parallel execution is only one part of the delivery bottleneck

Sixpack also helps teams provision test data inside pipelines, coordinate consistent states across microservices, replace slow provisioning workflows, and remove cross-team dependencies around test data.

Sixpack

When valid state is always available on demand, testing stops being the hidden scalability ceiling of your release pipeline.